Colorado legislature pushes AI rules targeting health care, therapy and chatbots

With just days left in the 2026 legislative session, Colorado lawmakers are rushing a trio of artificial intelligence bills to Gov. Jared Polis, aiming to set some of the state’s first guardrails on AI in health care, therapy, and consumer-facing chatbots.

As technology advances at breakneck speed, lawmakers are scrambling to establish regulations, particularly regarding minors and health care.

Regulating insurance companies

House Bill 1139, sponsored by Reps. Junie Joseph, D-Boulder, and Sheila Lieder, D-Littleton, and Sens. Lisa Cutter, D-Evergreen, and Lindsey Daugherty, D-Arvada, would prohibit health care insurance companies from basing coverage decisions solely on group data collected by AI systems.

The bill also requires insurance companies’ AI systems to consider a patient’s medical or clinical history, along with other important factors, in coverage decisions.

“Artificial intelligence systems are increasingly being used in utilization management and related processes that can influence whether care is approved or denied,” Lieder told House colleagues.

While artificial intelligence can improve consistency and efficiency, it also raises questions of accountability, she said, asking what oversight exists and who is responsible when automated systems make decisions.

The bill passed through the House on a 47-15 vote, with 13 Republicans and two Democrats opposed. In the Senate, three Republicans voted against it.

“This bill ensures that AI can support health care, but it can’t replace the human judgment, accountability, and compassion that patients deserve,” said Daugherty.

AI in therapy

A second measure, House Bill 1195, targets the use of artificial intelligence in psychotherapy services. The measure, sponsored by Reps. Gretchen Rydin, D-Littleton, and Javier Mabrey, D-Denver, and Sens. Judy Amabile, D-Boulder, and Kyle Mullica, D-Thornton, would prohibit therapists and social workers from using artificial intelligence to give recommendations or treatment plans to clients without clinician review.

The bill also requires therapists to have clients’ consent before using artificial intelligence to record or transcribe sessions, and prohibits individuals from offering psychotherapy services unless they are a regulated professional.

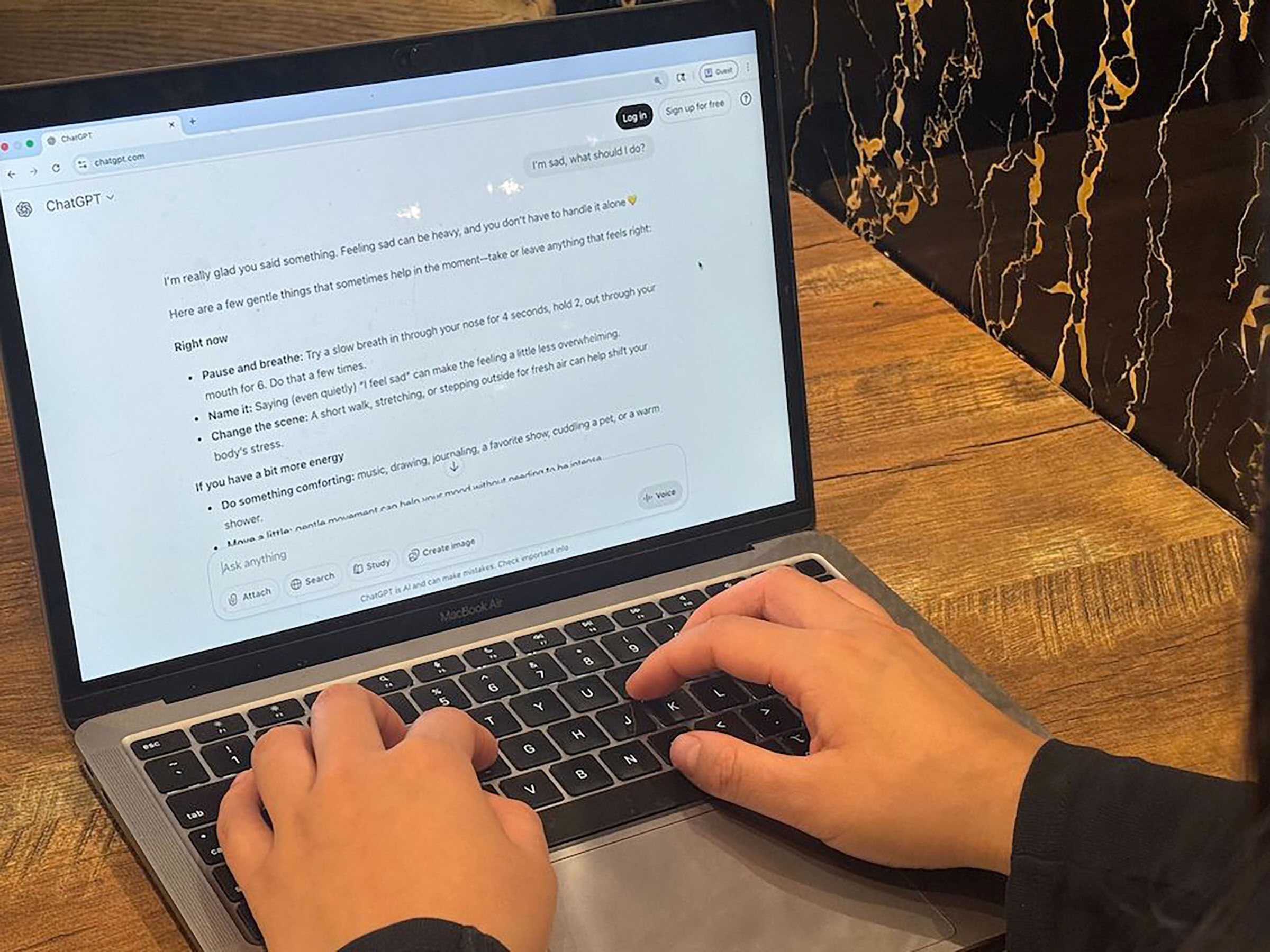

According to Mabrey, one in eight patients between 12 and 21 years old has said they use AI chatbots for mental health advice, and a third of adults say they’d be comfortable consulting with a chatbot about their mental health rather than a therapist.

Chatbots are designed to please users and keep them engaged, Mabrey said, which can be particularly concerning in mental health crises.

“They mirror emotional tone rather than challenge it,” he said.

Rydin, who is a licensed clinical social worker and therapist, said her profession is regulated for a reason.

While she acknowledged that there are “perfectly appropriate” places for AI in therapy, she said chatbots will never be able to replicate the relationship between a therapist and their client.

The bill passed through the House unanimously.

Speaking before his colleagues in the Senate, Mullica said HB 1195 was “a good bill that will make sure that the folks of Colorado who are receiving this care get it from the people they should be getting it from and not a chatbot.”

The measure passed the Senate on a 33-2 vote, with Colorado Springs Republicans Larry Liston and Lynda Zamora Wilson voting against it.

Regulating chatbots

Lawmakers are also targeting conversational artificial intelligence platforms, or chatbots, hoping to regulate situations in which users discuss self-harm or sexually explicit scenarios.

Sponsored by Reps. Sean Camacho, D-Denver, and Javier Mabrey, D-Denver, and Sens. John Carson, R-Highlands Ranch, and Iman Jodeh, D-Aurora, House Bill 1263 requires chatbot services to inform users that they are communicating with artificial intelligence, prohibits operators from providing minors with points or rewards that encourage engagement with the service, and requires operators to enact “reasonable measures” to prevent chatbots from producing sexually explicit material or statements that “simulate emotional dependence.”

The bill also requires tech companies to provide tools for users to manage privacy and account settings.

Additionally, it requires chatbot operators to implement a protocol for user prompts that mention suicidal ideation or self-harm, and it prohibits operators from stating or implying that any information provided by a chatbot is endorsed by or equivalent to services provided by a licensed professional.

According to Jodeh, two-thirds of American teens use conversational AI, and about one-third use it daily.

Several parents of children who died by suicide after conversing with chatbots spoke out against the bill, including Lori and Avery Schott, whose 18-year-old daughter Annalee took her own life in 2020.

The Schotts live in Sen. Byron Pelton’s district, and his brother-in-law was Annalee’s principal, the Sterling Republican said.

Pelton read a letter he received from Lori and Avery, alleging that parents weren’t involved in drafting the bill. The Schotts argued the bill was essentially written by big tech without any input from those who have been directly harmed by AI chatbots.

The letter added, “Legislation must protect children, and not create a false sense of safety to parents. This opens doors for tech to self-regulate and shield tech from liability. It is painfully obvious that a chair and a microphone were readily available for Big Tech and it’s infiltrating the halls of our Capitol while Colorado grieving families were left outside the door.”

Through tears, Pelton said, “I appreciate that we’re trying to fix this, but parents like Lori and Avery Schott went through a lot in our community. I know that there’s a lot of things we can do, and I know that we have to negotiate, but I just would like the parents to be heard, parents like Lori.”

Several senators said the bill is too vague, particularly in its requirement that chatbot developers institute “technically feasible measures” to prevent chatbots from producing sexually explicit content or statements that simulate emotional dependence.

“I agree that we need to do something about chatbots; I completely agree,” said Sen. Lisa Frizell, R-Castle Rock. “The harm they are doing to our children is extraordinary.”

However, Frizell said she felt the legislation, as written, was giving tech companies a pass.

“They can’t run legislation absolving themselves of responsibility,” she said. “For a technology company to say that it’s okay for them to take ‘technically feasible measures’ with software that they have created… they have the responsibility to control it, and if they can’t, then we have a much bigger problem.”

Sen. Dylan Roberts, D-Frisco, said amendments removing certain sections of the bill made the measure “meaningless” and “are basically allowing the tech companies to write the rules for themselves.”

Because the bill doesn’t include a definition of “technically feasible” and none exists in statute, developers will be the ones to decide what is and isn’t technically feasible.

“This bill would allow them to have that immunity,” Roberts said. “It doesn’t make sense to have the words ‘technically feasible’ and then not define what that means… the way the bill is currently written does not get it right, and it will not do anything.”

Sen. Matt Ball, D-Denver, disagreed with Roberts and Frizell. While he admitted the bill wasn’t perfect, he said it is far better than what’s in statute now, which is nothing.

The bill passed on a 40-24 vote in the House, with all Republicans and two Democrats — Kyle Brown of Louisville and Jacque Phillips of Thornton — voting in opposition.

Votes were more split in the Senate; the bill received a 24-11 vote in its second chamber.